AI innovation is accelerating rapidly, but the infrastructure required to run it at scale is struggling to keep pace, ultimately slowing enterprises' efforts to operationalize AI.

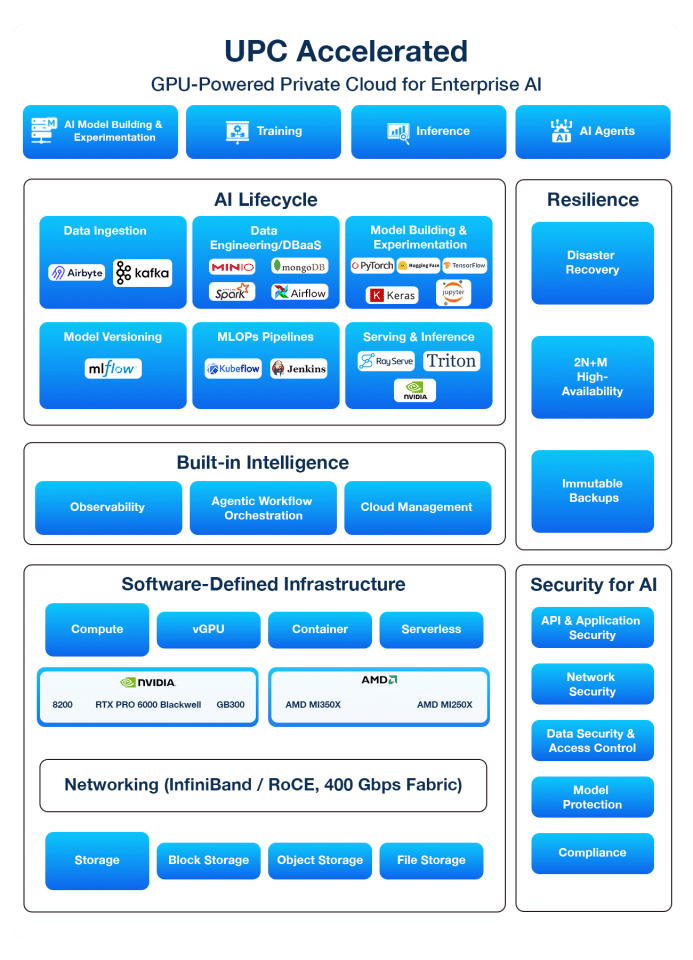

UPC Accelerated, our flagship GPU-powered private cloud, is designed from the ground up to deliver the speed, scale, and control required for the AI era – simplifying AI model experimentation, development, training, fine-tuning, inference, and deployment.

Enterprise AI infrastructure requires more than GPU availability. It demands a platform that integrates high-performance compute, resilient architecture, enterprise security, and developer-ready tooling. UPC Accelerated brings these capabilities together to power the entire AI lifecycle, from experimentation and training to large-scale inference.

By removing friction between data and deployment, UPC Accelerated simplifies how AI environments are built and operated. Its built-in observability layer and GenAI-powered cloud management stack continuously analyze compute utilization, performance, and costs, enabling real-time resource optimization without introducing additional tool sprawl.

The result is a platform that allows teams to move from prototype to production faster, with enterprise-grade assurance. Below are some of the key capabilities and benefits enterprises experience with UPC Accelerated:

As AI models grow in size and complexity, enterprises require infrastructure that can deliver consistent GPU performance, support distributed training, and handle real-time inference workloads at scale.

UPC Accelerated addresses these requirements with dedicated GPU clusters that eliminate resource contention commonly experienced in shared public cloud environments, ensuring predictable performance for AI workloads. Support for vGPUs and serverless compute enables flexible resource provisioning for different stages of the AI lifecycle.

Powered by the latest NVIDIA and AMD GPU accelerators, including NVIDIA Blackwell and Hopper, with 400 Gbps networking and AI-optimized storage capable of 100,000 IOPS, the private cloud supports multi-node training and inference without bottlenecks. An open and flexible ecosystem further allows teams to use preferred frameworks and tools, including PyTorch, TensorFlow, MLflow, Jupyter, and Docker, without vendor lock-in.

The result is faster model development cycles, scalable AI training and inference, and the performance consistency required to operationalize AI at enterprise scale.

While high-performance infrastructure is essential for AI training and inference, enterprises also need development environments that enable data scientists and ML engineers to iterate quickly and deploy models to production efficiently.

UPC Accelerated supports this with a unified AI lifecycle toolchain offering 100+ integrations that span data ingestion and engineering, model development, MLOps, and inference. Additionally, Kubernetes-native orchestration and self-service workflows allow teams to spin up environments, train models, and deploy applications without managing underlying infrastructure. Pre-built AI agents and agentic workflow orchestration further streamline development by automating multi-step workflows.

The result is faster time-to-AI production and reduced integration complexity.

As AI workloads scale, managing distributed GPU infrastructure, monitoring system health, and optimizing resource utilization can quickly become operationally complex. Enterprises therefore require intelligent automation and deep observability to maintain reliability while controlling infrastructure costs.

UPC Accelerated addresses these challenges with built-in observability and AIOps capabilities that provide end-to-end monitoring across AI infrastructure. The platform continuously analyzes infrastructure telemetry to detect anomalies and trigger automated remediation workflows, accelerating incident detection and resolution while reducing operational overhead.
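To make the idea of telemetry-driven anomaly detection concrete, here is a minimal sketch in Python. It is not UPC Accelerated's implementation: the trailing-window z-score technique, the function names `detect_anomalies` and `remediate`, and the sample GPU utilization values are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def detect_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples that deviate from the trailing-window
    mean by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change as a deviation.
            if samples[i] != mu:
                anomalies.append(i)
            continue
        if abs(samples[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


def remediate(index, value):
    """Hypothetical remediation hook; a real platform would open an
    incident or restart a workload here instead of returning text."""
    return f"sample {index}: utilization {value}% flagged for remediation"


# Illustrative GPU utilization readings (percent), not real measurements.
gpu_utilization = [90, 91, 89, 90, 92, 91, 20, 90, 91]
flagged = detect_anomalies(gpu_utilization)
messages = [remediate(i, gpu_utilization[i]) for i in flagged]
```

In this sketch, the sudden drop to 20% is the only reading that falls far outside its trailing window, so it is the only one passed to the remediation hook. Production AIOps systems typically use richer models, but the detect-then-act loop is the same shape.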

Embedded GenAI capabilities further enhance operations by analyzing performance and usage patterns to generate insights, recommend optimizations, and simplify cloud management tasks. Real-time cost monitoring and usage analytics provide visibility into AI infrastructure spending, enabling teams to right-size GPU consumption and optimize resource utilization.

The result is simplified AI infrastructure operations, improved reliability, and greater control over AI infrastructure costs.

As AI systems increasingly power business-critical applications, enterprises must ensure that AI infrastructure meets requirements for resilience, security, compliance, and data sovereignty. Protecting proprietary datasets, safeguarding models, and ensuring operational continuity are essential when deploying AI in production environments.

UPC Accelerated addresses these requirements with resilient infrastructure designed for continuous AI operations – 2N+M redundancy, immutable backups, and built-in disaster recovery.

The platform also provides comprehensive security across APIs, networks, data, and AI models. Built-in support for 50+ compliance frameworks, such as GDPR and HIPAA, along with auditability and governance controls, enables organizations to deploy AI workloads confidently in regulated environments.

To further protect sensitive data and intellectual property, UPC Accelerated offers private execution environments, geo-fencing capabilities, and single-tenant isolation. These capabilities ensure datasets remain within approved jurisdictions while safeguarding proprietary models and AI pipelines from unauthorized access or external exposure.

The result is a secure, resilient, and compliant AI infrastructure that enables enterprises to confidently operationalize AI at scale.

Beyond performance, productivity, and governance benefits, UPC Accelerated also delivers significant cost advantages. GPU-accelerated AI in public clouds often introduces opaque pricing, egress fees, and premium instance costs that escalate as training and inference workloads scale.

By contrast, private cloud environments like UPC Accelerated provide predictable pricing and dedicated performance. With isolated GPU clusters, private networking, and optimized resource utilization, organizations can run large-scale AI workloads more efficiently, achieving 30–40% lower total cost of ownership compared to hyperscaler environments.
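As a rough illustration of what a 30–40% reduction in total cost of ownership means in practice, the arithmetic can be sketched as follows. The function name `tco_range` and the baseline spend figure are hypothetical; only the 30–40% range comes from the claim above.

```python
def tco_range(baseline, low_saving_pct=30, high_saving_pct=40):
    """Return (lowest, highest) projected TCO after applying the
    high and low ends of a savings range to a baseline cost."""
    lowest = baseline * (100 - high_saving_pct) / 100
    highest = baseline * (100 - low_saving_pct) / 100
    return lowest, highest


# Hypothetical hyperscaler baseline of 1,000,000 per year (any currency).
lowest, highest = tco_range(1_000_000)
```

With that assumed baseline, the projected private cloud cost falls between 600,000 and 700,000 per year. Actual savings depend on workload mix, utilization, and data egress patterns.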

This combination of performance, control, and cost predictability enables enterprises to confidently move AI workloads from experimentation to production at scale, driving a shift toward AI acceleration platforms like UPC Accelerated.

To discover how UPC Accelerated can accelerate your AI journey, book a meeting with me.

ramkiramani@prestage.unitedlayer.com